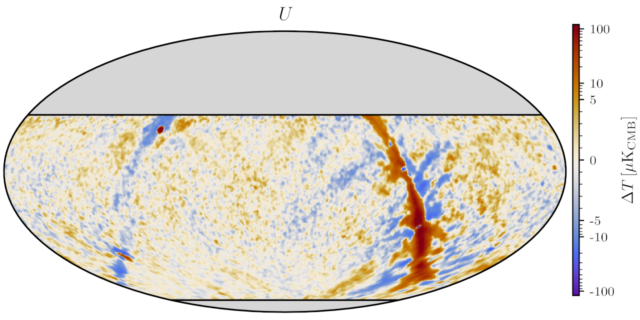

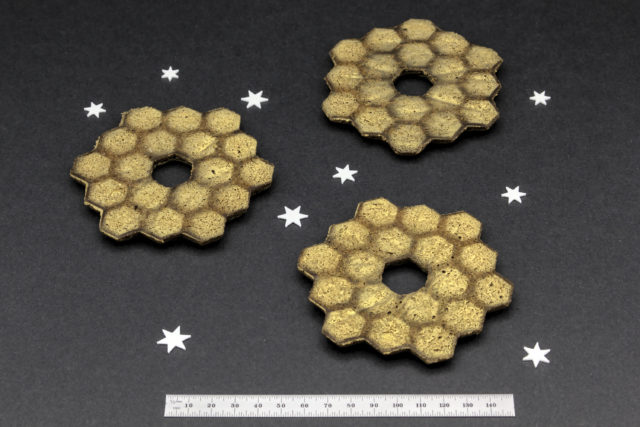

Earlier this week, this year’s BICEP / Keck collaboration meeting was held at Harvard. (We build, operate, and analyze data from cosmic microwave background telescopes installed at the South Pole.) As a collaboration member at the host institution, I decided to bake some BICEP-themed cookies for a coffee break at the meeting, and settled on butter cookies in the style of Pepperidge Farm Chessmen cookies.
